The prospector will add an extra application field to the Elastic documents generated, and it will also tag them.Īlso, the output configuration is fairly simple: just ship all logging to Elastic.

Harvesters are the components that keep track of a single file, opening and closing it and processing data in it. In this example, we see one prospector, a process that manages one or more harvesters. This means the configuration file is relatively simple:įilebeat.prospectors: - input_type: log paths: - /var/log/nginx/*.log fields: application: nginx tags: output.elasticsearch: hosts: pipeline: "general" pipelines: - pipeline: "nginx-access" ntains: tags: "access" privacy sensitive info), you might not want to ship it to Elastic even though that will clean the privacy-related info.īut for other situations, Filebeat might well be a viable alternative, for example because it’s more light-weight than Logstash.Īs said, Filebeats approach is different: it will keep track of what parts of a log file have been processed so far, and detect changes to that file (because your application genereted new logging, for example). If, for some reason, your log files contain information that is not allowed to leave the system (e.g. Whether or not this is a good solution will probably depend on your context. When it comes to getting your logging data into Elastic, I met a new friend: Filebeat.įilebeat follows an approach different from Logstash: the latter does all processing locally, where you have the raw logging data, whereas the former is relatively dumb and just ships all logging to Elastic, relying on Elastic to do processing, cleaning and the like.

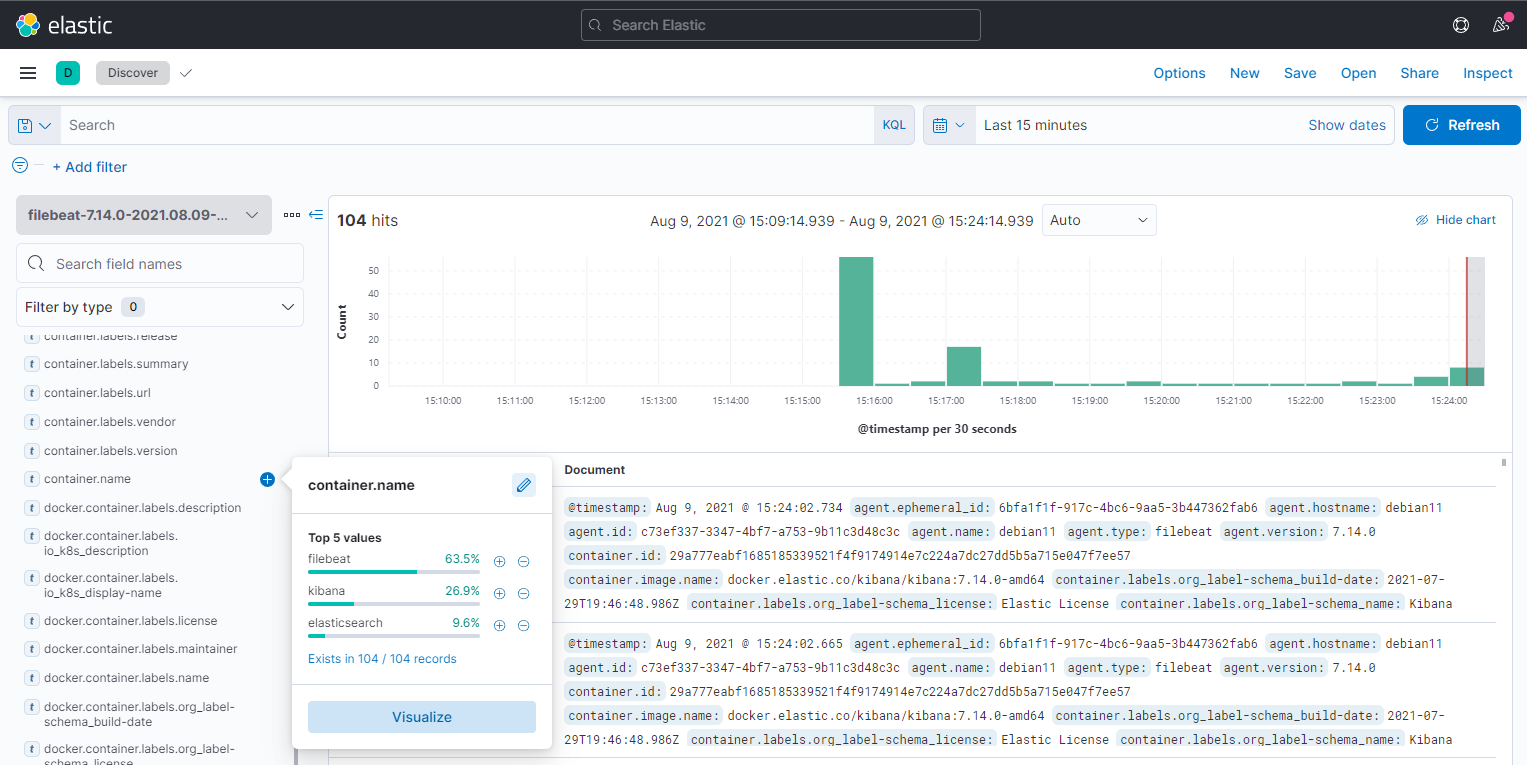

What I quite liked was the query editor that you can use as a simple way to interact with Elastic.Ī bit like Postman or similar tools, but simpler, since it is only meant to communicate with Elastic. Kibana is now also at a 5.x version, since it is versioned together with Elastic.įrom an end-user perspective it might seem that nothing changed that much, at first glance.īut I guess under the hood a lot has changed.Īt least it is now deployed in a different way, since it comes with its own web server. Grok incremental contructor - a kind of wizard to help you detect and construct pattern.Grok matcher - tests whether certain inputs on a pattern gives you the right output.Writing these patterns is often a trial-and-error process.īut don’t worry, there are two nice online tools to help you with that: If SEMANTIC is omitted, the text that matched the pattern will not be included as a field in the target document. This example says “there’s a set of chars that matches the NUMBER pattern store it in the bytes field of the target document with type int”. "patterns": [ "%, but SEMANTIC and TYPE can be omitted. "description" : "nginx access logs pipeline", Let’s look at a simple example that processes lines from an nginx access log. It will output the documents that you supplied after they have been transformed by the pipeline, but the documents will not be stored in Elasticsearch. This error handling can be per-processor or at the global level of your pipeline.Īfter defining the pipeline, you often want to test it.Įlastic offers you an endpoint to do that, called the Simulate API.įirst, store the pipeline in Kibana (using PUT _ingest/pipeline/my-pipeline-id), and then simulate an invocation of it using POST _ingest/pipeline/_simulate. Of course, error handling is included, too, meaning you can define what should be done when some of the steps fail. Processors can be chained to form very complex pipelines that will eventually perform complex transformations. 
There are plenty of processors, varying from simple things like adding a field to a document to complex stuff like extracting structured fields a single text field, extracting key/value-pairs or parsing JSON. Processing takes place in pipelines which consists of processors. Using Ingest Node, you can pre-process documents before they are actually stored in Elasticsearch. Quite a big change actually happened with the 5.x version: Ingest Node. To start with Elasticsearch: it has updated from 1.4 that I used before to 5.x to date. Recently, I was asked by another team in my company to assist them in setting it up for their team.Ī good chance to catch up with some old friends, and see how they have changed over the years.

It was fun to do and very instructive afterwards, I wrote an article about my experiences and spoke at various conferences. A few years ago, I had an assignment at my former client involving Elasticsearch, Logstash and Kibana to build an operational dashboard.
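To make the part about Ingest Node pipelines, error handling and the Simulate API a bit more tangible, here is a minimal Python sketch that only builds the JSON body for `POST _ingest/pipeline/_simulate`; it does not talk to a cluster. The grok pattern, the field names (`client_ip`, `grok_failed`, `error`) and the helper `simulate_request` are assumptions made up for illustration, not the pipeline from this article.

```python
import json

# Hypothetical pipeline: one grok processor with its own on_failure handler,
# plus a pipeline-level on_failure block as a global fallback.
pipeline = {
    "description": "nginx access logs pipeline",
    "processors": [
        {
            "grok": {
                "field": "message",
                # Assumed pattern, purely for illustration.
                "patterns": ["%{IPORHOST:client_ip} %{NUMBER:bytes:int}"],
                # Per-processor error handling.
                "on_failure": [
                    {"set": {"field": "grok_failed", "value": True}}
                ],
            }
        }
    ],
    # Pipeline-level error handling.
    "on_failure": [
        {"set": {"field": "error", "value": "{{ _ingest.on_failure_message }}"}}
    ],
}


def simulate_request(pipeline, messages):
    """Build the request body for POST _ingest/pipeline/_simulate."""
    return {
        "pipeline": pipeline,
        "docs": [{"_source": {"message": m}} for m in messages],
    }


body = simulate_request(pipeline, ["203.0.113.7 512"])
print(json.dumps(body, indent=2))
```

The printed JSON can be pasted into the Kibana query editor after the `POST _ingest/pipeline/_simulate` line. Because the pipeline travels in the request body, nothing has to be stored first.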

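The `%{SYNTAX:SEMANTIC:TYPE}` notation for Grok patterns can be illustrated with a toy translator in Python. This is a sketch of the idea, not how Elasticsearch implements Grok: the three entries in `PATTERNS` are simplified stand-ins for the real pattern library, and the function names are made up here.

```python
import re

# Tiny stand-in pattern library; real Grok ships many more definitions.
PATTERNS = {
    "NUMBER": r"[+-]?\d+(?:\.\d+)?",
    "WORD": r"\w+",
    "IP": r"\d{1,3}(?:\.\d{1,3}){3}",
}
CASTS = {"int": int, "float": float}
# Matches %{SYNTAX}, %{SYNTAX:SEMANTIC} and %{SYNTAX:SEMANTIC:TYPE}.
TOKEN = re.compile(r"%\{(\w+)(?::(\w+))?(?::(\w+))?\}")


def compile_grok(expression):
    """Translate Grok tokens into a regex plus a map of type casts."""
    casts = {}
    parts = []
    pos = 0
    for m in TOKEN.finditer(expression):
        # Literal text between tokens must match exactly.
        parts.append(re.escape(expression[pos:m.start()]))
        syntax, semantic, type_ = m.groups()
        if semantic:
            # Named group: the matched text becomes a field.
            parts.append(f"(?P<{semantic}>{PATTERNS[syntax]})")
            if type_:
                casts[semantic] = CASTS[type_]
        else:
            # No SEMANTIC: match the text but do not keep it.
            parts.append(f"(?:{PATTERNS[syntax]})")
        pos = m.end()
    parts.append(re.escape(expression[pos:]))
    return re.compile("".join(parts)), casts


def grok(expression, text):
    """Return the extracted fields, or None if the text does not match."""
    regex, casts = compile_grok(expression)
    match = regex.search(text)
    if match is None:
        return None
    return {k: casts.get(k, str)(v) for k, v in match.groupdict().items()}


print(grok("%{IP:client} %{WORD} %{NUMBER:bytes:int}", "203.0.113.7 GET 512"))
# prints {'client': '203.0.113.7', 'bytes': 512}
```

Note how `%{WORD}` has to match but leaves no field behind, while `bytes` ends up as an integer rather than a string.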

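Filebeat's way of remembering which part of a log file has already been processed can be sketched in a few lines of Python. This is an illustration of the idea only, not Filebeat's actual implementation: the `Harvester` class here is hypothetical, keeps its offset in memory, and ignores things like log rotation.

```python
import os
import tempfile


class Harvester:
    """Follows one file and remembers how far it has been read."""

    def __init__(self, path):
        self.path = path
        self.offset = 0  # byte position of the first unprocessed byte

    def poll(self):
        """Return the complete lines appended since the previous poll."""
        with open(self.path, "rb") as f:
            f.seek(self.offset)
            chunk = f.read()
        # Only consume up to the last newline; a partial line waits for later.
        end = chunk.rfind(b"\n") + 1
        self.offset += end
        return [line.decode("utf-8") for line in chunk[:end].splitlines()]


# Simulate an application that appends to its log between two polls.
with tempfile.TemporaryDirectory() as tmp:
    log_path = os.path.join(tmp, "access.log")
    with open(log_path, "w") as f:
        f.write("first\nsecond\n")
    harvester = Harvester(log_path)
    first_batch = harvester.poll()
    with open(log_path, "a") as f:
        f.write("third\npart")
    second_batch = harvester.poll()

print(first_batch)   # ['first', 'second']
print(second_batch)  # ['third']
```

The unfinished `part` line is not shipped yet; it would be picked up on a later poll once the application has written the rest of the line.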